The protection of sensitive information, adherence to compliance standards, and fortification against dynamic threats like ransomware have become non-negotiable requirements. The need for robust backup practices isn’t a choice…

Veeam Backup & Replication version 12.1 comes with new object storage capabilities, including the extension of the Archive Tier to on-premises storage solutions compatible with Amazon S3 Glacier.

Optimizing your data storage hardware is essential to getting the most out of your data. Even durable media like tape requires organizations to migrate data over time as new technology emerges with faster performance and higher capacities.

The exponential increase in data generation and storage has been documented in numerous global studies, with some indicating data growth rates as high as 35% per annum. This proliferation of data calls for effective and efficient data management strategies. One key aspect of managing growing data is archiving, which involves preserving data in a long-term storage system for future use. Not surprisingly, a recent report by Solutions North Consulting projects that there will be up to six times more data stored in data archives than in primary storage by 2030.[1] Modern digital archive technology brings notable advancements in data management.

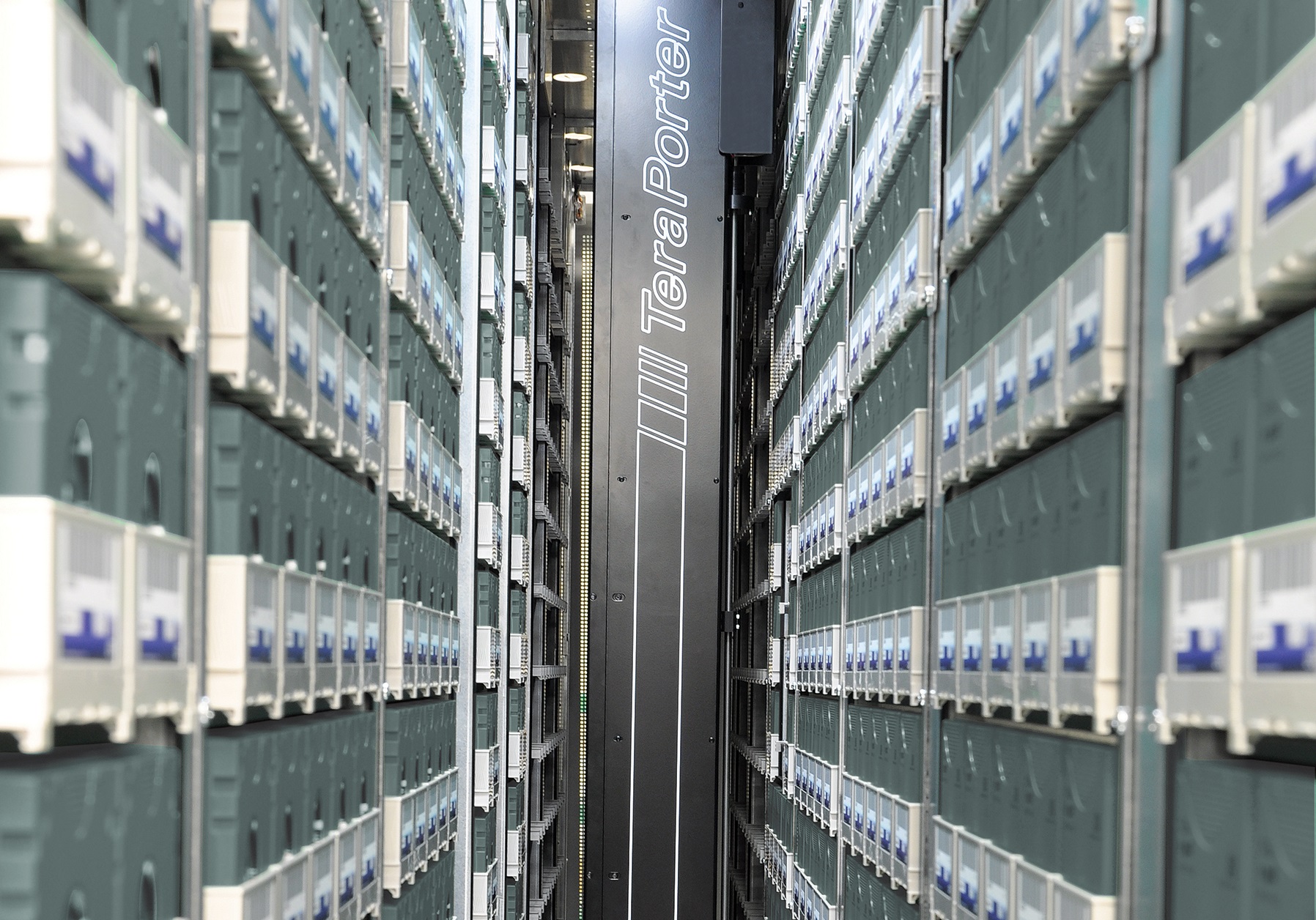

Oracle tape libraries have historically offered reliable, high-capacity storage for enterprises across various industries. However, as time and technology have progressed, these libraries have become less adaptable to organizations’ evolving data storage needs.

Cloud storage is not a one-size-fits-all solution. Organizations that have rushed to offload storage workloads to the cloud have encountered unexpected challenges and costs. Companies are reevaluating their cloud storage…

Tape has been a reliable and cost-effective way to store data that needs to be preserved for decades. As the storage industry has embraced new interfaces, such as object storage, the question comes up, “Is tape storage still relevant?”

Organizations wishing to optimize their data storage environments for backup and archive are drawn to the scalability and simplicity of object storage. Spectra has led the way in bringing this dynamic interface to the world of tape automation. Combining the flexibility of object storage with the reliability, security, and cost-effectiveness of tape technology is a true game changer in the world of long-term storage.

By Matt Ninesling, Director of Hardware Engineering, Spectra Logic

Tape technology has long been a reliable choice for data storage and backup, but how do you know when it’s time…

By Matt Ninesling, Director of Hardware Engineering, Spectra Logic

Have you asked yourself… How much time do you spend recalibrating new tape cartridges or initializing new LTO-9 media? How many times…

Object storage is becoming the de facto standard for data movement and management of long-term storage. Commonly used in cloud storage, popular object storage solutions include Amazon S3, offered by…